
# IMPORTANT NOTICE - UPDATE


This repository does not hold a copy of the proprietary Claude Code typescript source code.


This is a clean-room Rust reimplementation of Claude Code's behavior.


The process was explicitly two-phase:


Specification [`spec/`](https://github.com/kuberwastaken/claude-code/tree/main/spec) - An AI agent analyzed the source and produced exhaustive behavioral specifications, with improvements that deviate from the original: architecture, data flows, tool contracts, system designs. No source code was carried forward.


Implementation [`src/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust) - A separate AI agent implemented from the spec alone, never referencing the original TypeScript. The output is idiomatic Rust that reproduces the behavior, not the expression.


This mirrors the clean-room approach Phoenix Technologies used to reimplement the IBM PC BIOS in 1984, and the principle from Baker v. Selden (1879) that copyright protects expression, not ideas or behavior.


The analysis below is commentary on publicly available software, protected under fair use (17 U.S.C. § 107). Code excerpts are quoted to illustrate technical points from a public source - no unauthorized access was involved in this process or research.


# Claude Code's Entire Source Code Got Leaked via a Sourcemap in npm, Let's Talk About It


## Technical Breakdown


> **PS:** I've also published this [breakdown on my blog](https://kuber.studio/blog/AI/Claude-Code's-Entire-Source-Code-Got-Leaked-via-a-Sourcemap-in-npm,-Let's-Talk-About-it) with a better reading experience and UX :)


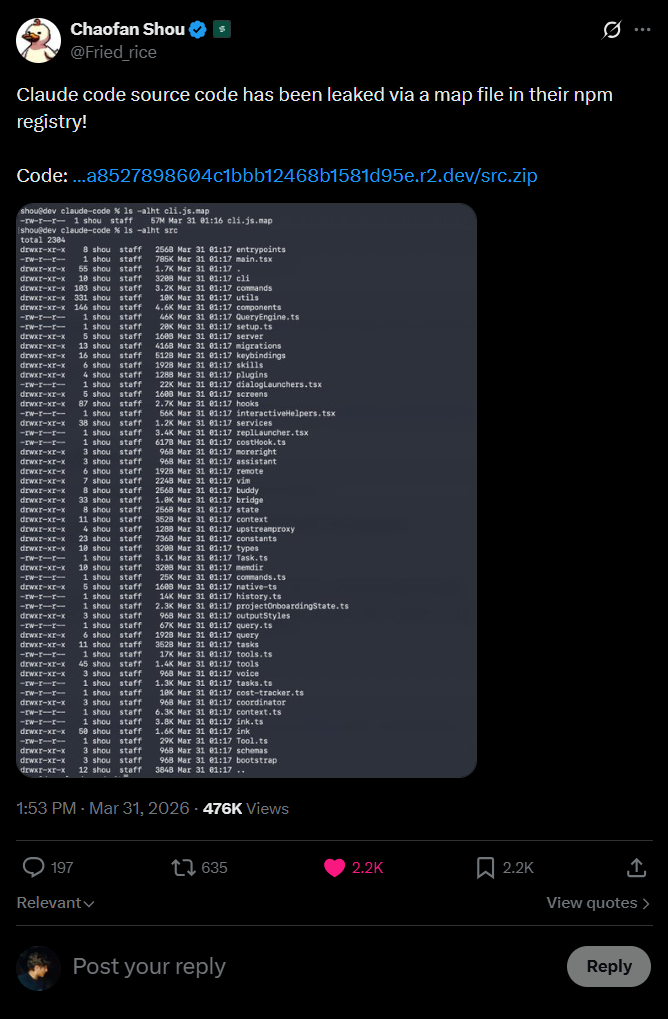

Earlier today (March 31st, 2026), Chaofan Shou on X discovered something that Anthropic probably didn't want the world to see: the **entire source code** of Claude Code, Anthropic's official AI coding CLI, was sitting in plain sight on the npm registry via a sourcemap file bundled into the published package.


[](https://raw.githubusercontent.com/kuberwastaken/claude-code/main/public/leak-tweet.png)


This repository is a backup of that leaked source, and this README is a full breakdown of what's in it, how the leak happened and most importantly, the things we now know that were never meant to be public.


Let's get into it.


## How Did This Even Happen?


This is the part that honestly made me go "...really?"


When you publish a JavaScript/TypeScript package to npm, the build toolchain often generates **source map files** (`.map` files). These files are a bridge between the minified/bundled production code and the original source. They exist so that when something crashes in production, the stack trace can point you to the *actual* line of code in the *original* file, not some unintelligible line 1, column 48293 of a minified blob.


But the fun part is **source maps contain the original source code**. The actual, literal, raw source code, embedded as strings inside a JSON file.


The structure of a `.map` file looks something like this:


```json
{
  "version": 3,
  "sources": ["../src/main.tsx", "../src/tools/BashTool.ts", "..."],
  "sourcesContent": ["// The ENTIRE original source code of each file", "..."],
  "mappings": "AAAA,SAAS,OAAO..."
}
```


That `sourcesContent` array? That's everything.


Every file. Every comment. Every internal constant. Every system prompt. All of it, sitting right there in a JSON file that npm happily serves to anyone who runs `npm pack` or even just browses the package contents.
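
To make that concrete, here is a minimal sketch of how the embedded files can be read back out of a map. The function name `extractEmbeddedSources` and the two-file sample map are made up for illustration; only the `sources` and `sourcesContent` fields come from the format shown above.

```typescript
// Minimal shape of a version 3 source map; only the fields used here.
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

// Pair each path in `sources` with its embedded text from `sourcesContent`.
// Entries without embedded content are skipped.
function extractEmbeddedSources(mapJson: string): Map<string, string> {
  const map = JSON.parse(mapJson) as SourceMapV3;
  const recoveredFiles = new Map<string, string>();
  map.sources.forEach((path, i) => {
    const content = map.sourcesContent?.[i];
    if (typeof content === "string") {
      recoveredFiles.set(path, content);
    }
  });
  return recoveredFiles;
}

// Hypothetical two-file map, not taken from the real package.
const exampleMap = JSON.stringify({
  version: 3,
  sources: ["../src/a.ts", "../src/b.ts"],
  sourcesContent: ["export const a = 1;", null],
  mappings: "AAAA",
});
const recoveredFiles = extractEmbeddedSources(exampleMap);
```

No decoding of `mappings` is needed for this; the original text is stored as plain strings.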


This is not a novel attack vector. It's happened before and honestly it'll happen again.


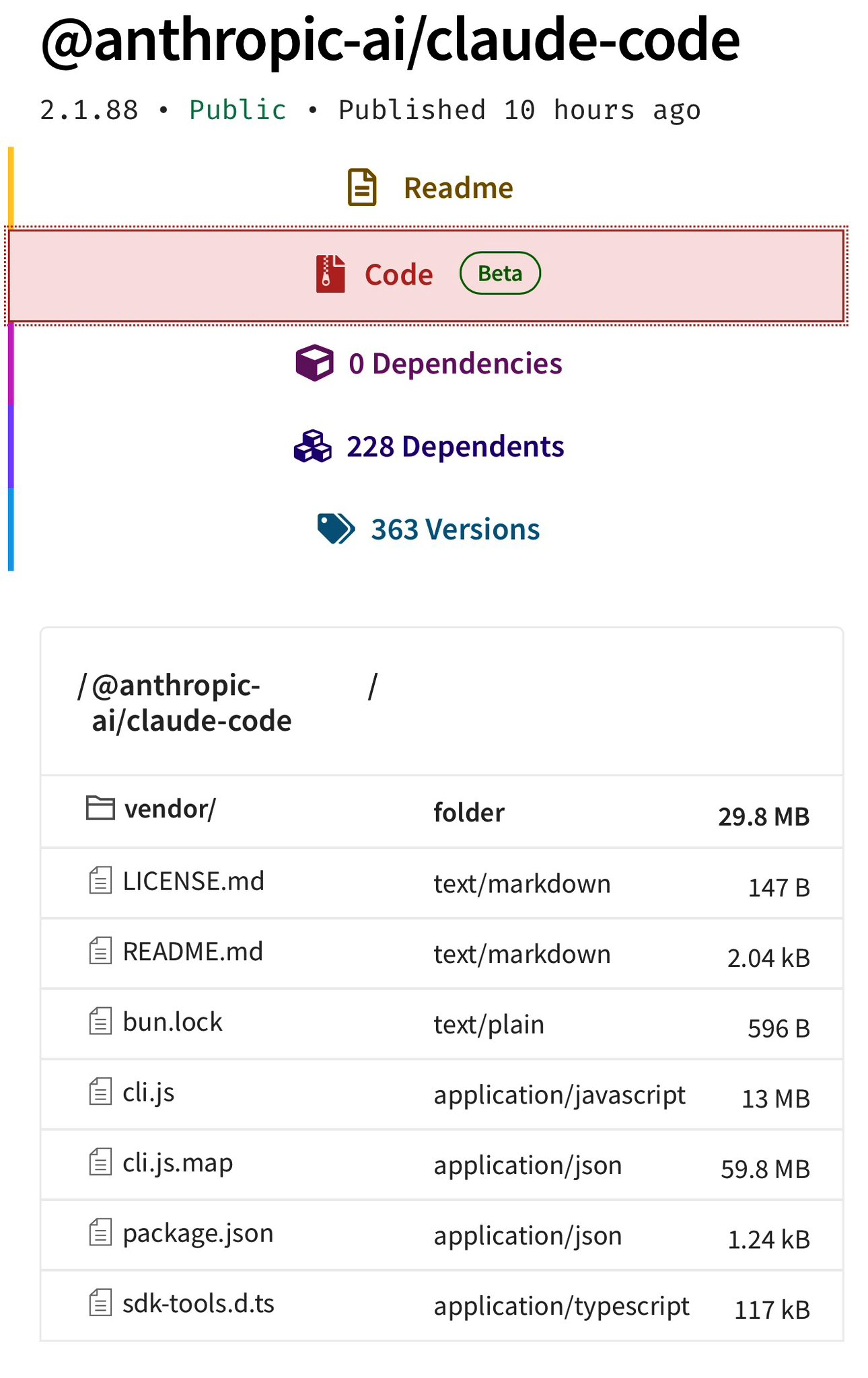

The mistake is almost always the same: someone forgets to add `*.map` to their `.npmignore` or doesn't configure their bundler to skip source map generation for production builds. With Bun's bundler (which Claude Code uses), source maps are generated by default unless you explicitly turn them off.
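
A cheap guard is to scan the list of files about to be published before shipping. The sketch below assumes you already have that list as an array of paths (for example, collected from the output of `npm pack --dry-run`); the function name `findSourceMaps` and the sample listing are hypothetical.

```typescript
// Return every path in a package file listing that looks like a source map.
function findSourceMaps(packedFiles: string[]): string[] {
  return packedFiles.filter((file) => file.toLowerCase().endsWith(".map"));
}

// Hypothetical listing of a package's contents.
const packedFiles = ["package.json", "cli.js", "cli.js.map", "README.md"];
const leakedMaps = findSourceMaps(packedFiles);

if (leakedMaps.length > 0) {
  console.log("Source maps would be published: " + leakedMaps.join(", "));
}
```

Failing the release step when this list is non-empty is one way to catch the mistake without relying on anyone remembering `.npmignore`.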


[](https://raw.githubusercontent.com/kuberwastaken/claude-code/main/public/claude-files.png)


The funniest part is, there's an entire system called ["Undercover Mode"](#undercover-mode--do-not-blow-your-cover) specifically designed to prevent Anthropic's internal information from leaking.


They built a whole subsystem to stop their AI from accidentally revealing internal codenames in git commits... and then shipped the entire source in a `.map` file, likely by Claude.


---


## What's Claude Under The Hood?


If you've been living under a rock, Claude Code is Anthropic's official CLI tool for coding with Claude and the most popular AI coding agent.


From the outside, it looks like a polished but relatively simple CLI.


From the inside, it's a **785KB [`main.tsx`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/cli/src/main.rs)** entry point, a custom React terminal renderer, 40+ tools, a multi-agent orchestration system, a background memory consolidation engine called "dream," and much more.


Enough yapping, here are some genuinely cool parts of the source code that I found after an afternoon deep dive:


---


## BUDDY - A Tamagotchi Inside Your Terminal


I am not making this up.


Claude Code has a full **Tamagotchi-style companion pet system** called "Buddy." A **deterministic gacha system** with species rarity, shiny variants, procedurally generated stats, and a soul description written by Claude on first hatch like OpenClaw.


The entire thing lives in [`buddy/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates) and is gated behind the `BUDDY` compile-time feature flag.


### The Gacha System


Your buddy's species is determined by a **Mulberry32 PRNG**, a fast 32-bit pseudo-random number generator seeded from your `userId` hash with the salt `'friend-2026-401'`:


```typescript
// Mulberry32 PRNG - deterministic, reproducible per-user
function mulberry32(seed: number): () => number {
  return function() {
    seed |= 0; seed = seed + 0x6D2B79F5 | 0;
    var t = Math.imul(seed ^ seed >>> 15, 1 | seed);
    t = t + Math.imul(t ^ t >>> 7, 61 | t) ^ t;
    return ((t ^ t >>> 14) >>> 0) / 4294967296;
  }
}
```


Same user always gets the same buddy.


### 18 Species (Obfuscated in Code)


The species names are hidden via `String.fromCharCode()` arrays - Anthropic clearly didn't want these showing up in string searches. Decoded, the full species list is:


| Rarity | Species |
|--------|---------|
| **Common** (60%) | Pebblecrab, Dustbunny, Mossfrog, Twigling, Dewdrop, Puddlefish |
| **Uncommon** (25%) | Cloudferret, Gustowl, Bramblebear, Thornfox |
| **Rare** (10%) | Crystaldrake, Deepstag, Lavapup |
| **Epic** (4%) | Stormwyrm, Voidcat, Aetherling |
| **Legendary** (1%) | Cosmoshale, Nebulynx |


On top of that, there's a **1% shiny chance** completely independent of rarity. So a Shiny Legendary has a **0.01%** chance of being rolled (1% × 1%), and a specific one like a Shiny Nebulynx is rarer still. Dang.
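
Putting the pieces together, a roll could look roughly like the sketch below. The generator is the one quoted above and the weights come from the rarity table, but several things are my assumptions rather than anything shown in the source: the FNV-1a hash, concatenating the salt onto the `userId`, the order of the two rolls, and the names `fnv1a` and `rollBuddy`.

```typescript
type Rarity = "Common" | "Uncommon" | "Rare" | "Epic" | "Legendary";

// Weights copied from the rarity table above (they sum to 100).
const RARITY_WEIGHTS: [Rarity, number][] = [
  ["Common", 60],
  ["Uncommon", 25],
  ["Rare", 10],
  ["Epic", 4],
  ["Legendary", 1],
];

// Same generator as the snippet above, with explicit parentheses.
function mulberry32(seed: number): () => number {
  return function () {
    seed |= 0;
    seed = (seed + 0x6d2b79f5) | 0;
    let t = Math.imul(seed ^ (seed >>> 15), 1 | seed);
    t = (t + Math.imul(t ^ (t >>> 7), 61 | t)) ^ t;
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// ASSUMPTION: a 32-bit FNV-1a hash stands in for whatever hash the real code uses.
function fnv1a(text: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0;
}

// ASSUMPTION: salt appended to the userId; rarity rolled first, shiny second.
function rollBuddy(userId: string): { rarity: Rarity; shiny: boolean } {
  const rand = mulberry32(fnv1a(userId + "friend-2026-401"));
  const roll = rand() * 100;
  let cumulative = 0;
  let rarity: Rarity = "Legendary"; // fallback if rounding skips every bucket
  for (const [name, weight] of RARITY_WEIGHTS) {
    cumulative += weight;
    if (roll < cumulative) {
      rarity = name;
      break;
    }
  }
  const shiny = rand() < 0.01; // independent 1% roll
  return { rarity, shiny };
}
```

Because every random draw comes from a generator seeded by the user, calling `rollBuddy` twice with the same `userId` gives the same result, which is the whole point of the deterministic design.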


### Stats, Eyes, Hats, and Soul


Each buddy gets procedurally generated:

- **5 stats**: `DEBUGGING`, `PATIENCE`, `CHAOS`, `WISDOM`, `SNARK` (0-100 each)
- **6 possible eye styles** and **8 hat options** (some gated by rarity)
- **A "soul"** as mentioned, the personality generated by Claude on first hatch, written in character


The sprites are rendered as **5-line-tall, 12-character-wide ASCII art** with multiple animation frames. There are idle animations, reaction animations, and they sit next to your input prompt.


### The Lore


The code references April 1-7, 2026 as a **teaser window** (so probably for Easter?), with a full launch gated for May 2026. The companion has a system prompt that tells Claude:


```
A small {species} named {name} sits beside the user's input box and
occasionally comments in a speech bubble. You're not {name} - it's a
separate watcher.
```


So it's not just cosmetic - the buddy has its own personality and can respond when addressed by name. I really do hope they ship it.


---


## KAIROS - "Always-On Claude"


Inside [`assistant/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates), there's an entire mode called **KAIROS**: a persistent, always-running Claude assistant that doesn't wait for you to type. It watches, logs, and **proactively** acts on things it notices.


This is gated behind the `PROACTIVE` / `KAIROS` compile-time feature flags and is completely absent from external builds.


### How It Works


KAIROS maintains **append-only daily log files** - it writes observations, decisions, and actions throughout the day. On a regular interval, it receives `<tick>` prompts that let it decide whether to act proactively or stay quiet.


The system has a **15-second blocking budget**: any proactive action that would block the user's workflow for more than 15 seconds gets deferred. This is Claude trying to be helpful without being annoying.
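
The budget rule is simple enough to sketch. Everything named here (`ProactiveAction`, `estimatedBlockingMs`, `decideOnTick`) is hypothetical; only the 15-second threshold and the run-or-defer outcome come from the description above.

```typescript
// Hypothetical shape: an action the assistant is considering on a tick,
// with an estimate of how long it would block the user's workflow.
interface ProactiveAction {
  label: string;
  estimatedBlockingMs: number;
}

type TickDecision = "run" | "defer";

const BLOCKING_BUDGET_MS = 15000; // the 15-second budget

// Defer anything that would block for longer than the budget.
function decideOnTick(
  action: ProactiveAction,
  budgetMs: number = BLOCKING_BUDGET_MS,
): TickDecision {
  return action.estimatedBlockingMs > budgetMs ? "defer" : "run";
}

const quickNote = decideOnTick({ label: "append log entry", estimatedBlockingMs: 200 });
const longJob = decideOnTick({ label: "run full test suite", estimatedBlockingMs: 90000 });
```

Here `quickNote` is `"run"` and `longJob` is `"defer"`. How the real system estimates blocking time is not something the write-up covers.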


### Brief Mode


When KAIROS is active, there's a special output mode called **Brief**: extremely concise responses designed for a persistent assistant that shouldn't flood your terminal. Think of it as the difference between a chatty friend and a professional assistant who only speaks when they have something valuable to say.


### Exclusive Tools


KAIROS gets tools that regular Claude Code doesn't have:


| Tool | What It Does |
|------|-------------|
| **SendUserFile** | Push files directly to the user (notifications, summaries) |
| **PushNotification** | Send push notifications to the user's device |
| **SubscribePR** | Subscribe to and monitor pull request activity |


---


## ULTRAPLAN - 30-Minute Remote Planning Sessions


Here's one that's wild from an infrastructure perspective.


**ULTRAPLAN** is a mode where Claude Code offloads a complex planning task to a **remote Cloud Container Runtime (CCR) session** running **Opus 4.6**, gives it up to **30 minutes** to think, and lets you approve the result from your browser.


The basic flow:


1. Claude Code identifies a task that needs deep planning
2. It spins up a remote CCR session via the `tengu_ultraplan_model` config
3. Your terminal shows a polling state - checking every **3 seconds** for the result
4. Meanwhile, a browser-based UI lets you watch the planning happen and approve/reject it
5. When approved, there's a special sentinel value `__ULTRAPLAN_TELEPORT_LOCAL__` that "teleports" the result back to your local terminal
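
The terminal side of that flow is basically a polling loop with a deadline. Here's a rough sketch of it. The `PlanStatus` type, the `waitForPlan` function, and the injected `fetchStatus` and `sleep` callbacks are all my own stand-ins; only the 3-second interval and the 30-minute ceiling come from the flow above.

```typescript
// Hypothetical status shape for the remote planning session.
type PlanStatus =
  | { state: "pending" }
  | { state: "approved"; plan: string }
  | { state: "rejected" };

const POLL_INTERVAL_MS = 3000; // "checking every 3 seconds"
const MAX_WAIT_MS = 30 * 60 * 1000; // "up to 30 minutes"

// Poll until the plan is approved or rejected, or the time budget runs out.
// Returns the plan text on approval, otherwise null.
async function waitForPlan(
  fetchStatus: () => Promise<PlanStatus>,
  sleep: (ms: number) => Promise<void>,
  intervalMs: number = POLL_INTERVAL_MS,
  maxWaitMs: number = MAX_WAIT_MS,
): Promise<string | null> {
  let waitedMs = 0;
  while (waitedMs <= maxWaitMs) {
    const status = await fetchStatus();
    if (status.state === "approved") return status.plan;
    if (status.state === "rejected") return null;
    await sleep(intervalMs);
    waitedMs += intervalMs;
  }
  return null; // timed out
}
```

Passing `sleep` in as a parameter keeps the loop testable: a test can hand in a no-op and run thirty "minutes" of polling instantly.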


---


## The "Dream" System - Claude Literally Dreams


Okay this is genuinely one of the coolest things in here.


Claude Code has a system called **autoDream** ([`services/autoDream/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates)) - a background memory consolidation engine that runs as a **forked subagent**. The naming is very intentional. It's Claude... dreaming.


This is extremely funny because [I had the same idea for LITMUS last week - OpenClaw subagents creatively having leisure time to find fun new papers](https://github.com/Kuberwastaken/litmus)


### The Three-Gate Trigger


The dream doesn't just run whenever it feels like it. It has a **three-gate trigger system**:


1. **Time gate**: 24 hours since last dream
2. **Session gate**: At least 5 sessions since last dream
3. **Lock gate**: Acquires a consolidation lock (prevents concurrent dreams)


All three must pass. This prevents both over-dreaming and under-dreaming.
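
As a sketch, the three gates reduce to a short check that bails out at the first failure. The `DreamState` shape and the in-memory lock flag are hypothetical simplifications (the real lock is presumably something sturdier than a boolean); the 24-hour and 5-session thresholds are the ones listed above.

```typescript
// Hypothetical bookkeeping for the dream trigger.
interface DreamState {
  lastDreamAt: number; // epoch milliseconds of the last completed dream
  sessionsSinceLastDream: number;
  lockHeld: boolean; // true while another dream is running
}

const MIN_HOURS_BETWEEN_DREAMS = 24;
const MIN_SESSIONS_BETWEEN_DREAMS = 5;
const MS_PER_HOUR = 3600000;

// Returns true and takes the lock only if all three gates pass.
function tryStartDream(state: DreamState, now: number): boolean {
  const hoursSince = (now - state.lastDreamAt) / MS_PER_HOUR;
  if (hoursSince < MIN_HOURS_BETWEEN_DREAMS) return false; // time gate
  if (state.sessionsSinceLastDream < MIN_SESSIONS_BETWEEN_DREAMS) return false; // session gate
  if (state.lockHeld) return false; // lock gate
  state.lockHeld = true; // acquire the consolidation lock
  return true;
}
```

Ordering the cheap checks first means the lock is only touched when a dream is actually due.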


### The Four Phases


When it runs, the dream follows four strict phases from the prompt in [`consolidationPrompt.ts`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/query/src/compact.rs):


**Phase 1 - Orient**: `ls` the memory directory, read `MEMORY.md`, skim existing topic files to improve.


**Phase 2 - Gather Recent Signal**: Find new information worth persisting. Sources in priority: daily logs → drifted memories → transcript search.


**Phase 3 - Consolidate**: Write or update memory files. Convert relative dates to absolute. Delete contradicted facts.


**Phase 4 - Prune and Index**: Keep `MEMORY.md` under 200 lines AND ~25KB. Remove stale pointers. Resolve contradictions.


The prompt literally says:


> *"You are performing a dream - a reflective pass over your memory files. Synthesize what you've learned recently into durable, well-organized memories so that future sessions can orient quickly."*


The dream subagent gets **read-only bash** - it can look at your project but not modify anything. It's purely a memory consolidation pass.


---


## Undercover Mode - "Do Not Blow Your Cover"


This one is fascinating from a corporate strategy perspective.


Anthropic employees (identified by `USER_TYPE === 'ant'`) use Claude Code on public/open-source repositories. **Undercover Mode** ([`utils/undercover.ts`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/core/src/lib.rs)) prevents the AI from accidentally revealing internal information in commits and PRs.


When active, it injects this into the system prompt:


```
## UNDERCOVER MODE - CRITICAL

You are operating UNDERCOVER in a PUBLIC/OPEN-SOURCE repository. Your commit
messages, PR titles, and PR bodies MUST NOT contain ANY Anthropic-internal
information. Do not blow your cover.

NEVER include in commit messages or PR descriptions:
- Internal model codenames (animal names like Capybara, Tengu, etc.)
- Unreleased model version numbers (e.g., opus-4-7, sonnet-4-8)
- Internal repo or project names
- Internal tooling, Slack channels, or short links (e.g., go/cc, #claude-code-…)
- The phrase "Claude Code" or any mention that you are an AI
- Co-Authored-By lines or any other attribution
```


The activation logic:

- `CLAUDE_CODE_UNDERCOVER=1` forces it ON (even in internal repos)
- Otherwise it's **automatic**: active UNLESS the repo remote matches an internal allowlist
- There is **NO force-OFF** - *"if we're not confident we're in an internal repo, we stay undercover."*
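
Those three rules boil down to a fail-closed check, sketched below. The function name `isUndercover`, the exact-match comparison against the allowlist, and the sample remote are assumptions for illustration; the `USER_TYPE === 'ant'` precondition is left out to keep it short.

```typescript
// Decide whether Undercover Mode is active for the current repository.
// ASSUMPTION: "matches" is modeled as an exact string match on the remote URL.
function isUndercover(
  env: Record<string, string | undefined>,
  repoRemote: string | null,
  internalAllowlist: string[],
): boolean {
  // Forced ON, even in internal repos.
  if (env["CLAUDE_CODE_UNDERCOVER"] === "1") return true;
  // No force-OFF: only a confirmed internal remote turns the mode off.
  const isInternal =
    repoRemote !== null && internalAllowlist.indexOf(repoRemote) !== -1;
  return !isInternal;
}

// Hypothetical allowlist entry, not a real Anthropic remote.
const exampleAllowlist = ["git@internal.example:team/repo.git"];
```

Note that an unknown or missing remote falls through to `true`, which is the "if we're not confident, stay undercover" behavior.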


So this confirms:

1. **Anthropic employees actively use Claude Code to contribute to open-source** - and the AI is told to hide that it's an AI
2. **Internal model codenames are animal names** - Capybara, Tengu, etc.
3. **"Tengu"** appears hundreds of times as a prefix for feature flags and analytics events - it's almost certainly **Claude Code's internal project codename**


All of this is dead-code-eliminated from external builds. But source maps don't care about dead code elimination.


Makes me wonder how much havoc they're internally causing in open source repos.


---


## Multi-Agent Orchestration - "Coordinator Mode"


Claude Code has a full **multi-agent orchestration system** in [`coordinator/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates/query/src), activated via `CLAUDE_CODE_COORDINATOR_MODE=1`.


When enabled, Claude Code transforms from a single agent into a **coordinator** that spawns, directs, and manages multiple worker agents in parallel. The coordinator system prompt in [`coordinatorMode.ts`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/query/src/agent_tool.rs) is a masterclass in multi-agent design:


| Phase | Who | Purpose |
|-------|-----|---------|
| **Research** | Workers (parallel) | Investigate codebase, find files, understand problem |
| **Synthesis** | **Coordinator** | Read findings, understand the problem, craft specs |
| **Implementation** | Workers | Make targeted changes per spec, commit |
| **Verification** | Workers | Test changes work |


The prompt **explicitly** teaches parallelism:


> *"Parallelism is your superpower. Workers are async. Launch independent workers concurrently whenever possible - don't serialize work that can run simultaneously."*
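
In code terms, "don't serialize work that can run simultaneously" is the difference between awaiting workers one by one and starting them all before awaiting any. A minimal sketch, with `ResearchTask` and `runResearchPhase` as made-up names:

```typescript
// Hypothetical worker: a function that starts async work and resolves with findings.
type ResearchTask<T> = () => Promise<T>;

// Start every worker first, then wait for all of them together.
// Results come back in the same order the tasks were given.
async function runResearchPhase<T>(tasks: ResearchTask<T>[]): Promise<T[]> {
  const running = tasks.map((start) => start());
  return Promise.all(running);
}
```

With this shape, total wall-clock time is roughly that of the slowest worker rather than the sum of all of them. `Promise.all` rejects as soon as any worker fails, so a real coordinator might prefer `Promise.allSettled` to keep partial findings.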


Workers communicate via `<task-notification>` XML messages. There's a shared **scratchpad directory** (gated behind `tengu_scratch`) for cross-worker durable knowledge sharing. And the prompt has this gem banning lazy delegation:


> *Do NOT say "based on your findings" - read the actual findings and specify exactly what to do.*


The system also includes **Agent Teams/Swarm** capabilities (`tengu_amber_flint` feature gate) with in-process teammates using `AsyncLocalStorage` for context isolation, process-based teammates using tmux/iTerm2 panes, team memory synchronization, and color assignments for visual distinction.


---


## Fast Mode is Internally Called "Penguin Mode"


Yeah, they really called it Penguin Mode. The API endpoint in [`utils/fastMode.ts`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/core/src/lib.rs) is literally:


```typescript
const endpoint = `${getOauthConfig().BASE_API_URL}/api/claude_code_penguin_mode`
```


The config key is `penguinModeOrgEnabled`. The kill-switch is `tengu_penguins_off`. The analytics event on failure is `tengu_org_penguin_mode_fetch_failed`. Penguins all the way down.


---


## The System Prompt Architecture


The system prompt isn't a single string like most apps have - it's built from **modular, cached sections** composed at runtime in [`constants/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates/core/src).


The architecture uses a `SYSTEM_PROMPT_DYNAMIC_BOUNDARY` marker that splits the prompt into:

- **Static sections** - cacheable across organizations (things that don't change per user)
- **Dynamic sections** - user/session-specific content that breaks cache when changed


There's a function called `DANGEROUS_uncachedSystemPromptSection()` for volatile sections you explicitly want to break cache. The naming convention alone tells you someone learned this lesson the hard way.
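
The idea is easier to see in a toy version. In the sketch below the marker's string value, `buildSystemPrompt`, and `cacheablePrefix` are all invented for illustration; only the constant's name and the static/dynamic split come from the source.

```typescript
// Placeholder value: the real marker text is not shown in this write-up.
const SYSTEM_PROMPT_DYNAMIC_BOUNDARY = "<<DYNAMIC_BOUNDARY>>";

// Static sections go first, then the marker, then per-user or per-session sections.
function buildSystemPrompt(staticSections: string[], dynamicSections: string[]): string {
  return [...staticSections, SYSTEM_PROMPT_DYNAMIC_BOUNDARY, ...dynamicSections].join("\n\n");
}

// Everything before the marker is the part a prompt cache can reuse.
function cacheablePrefix(prompt: string): string {
  const index = prompt.indexOf(SYSTEM_PROMPT_DYNAMIC_BOUNDARY);
  return index === -1 ? prompt : prompt.slice(0, index);
}

const sharedSections = ["You are a coding assistant.", "Tool usage rules..."];
const promptForAlice = buildSystemPrompt(sharedSections, ["cwd: /home/alice/project"]);
const promptForBob = buildSystemPrompt(sharedSections, ["cwd: /home/bob/other"]);
```

`promptForAlice` and `promptForBob` differ, but their cacheable prefixes are identical. Anything volatile placed before the marker would change that prefix for everyone, which is presumably why the escape hatch has `DANGEROUS_` in its name.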


### The Cyber Risk Instruction


One particularly interesting section is the `CYBER_RISK_INSTRUCTION` in [`constants/cyberRiskInstruction.ts`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/core/src/lib.rs), which has a massive warning header:


```
IMPORTANT: DO NOT MODIFY THIS INSTRUCTION WITHOUT SAFEGUARDS TEAM REVIEW
This instruction is owned by the Safeguards team (David Forsythe, Kyla Guru)
```


So now we know exactly who at Anthropic owns the security boundary decisions and that it's governed by named individuals on a specific team. The instruction itself draws clear lines: authorized security testing is fine, destructive techniques and supply chain compromise are not.


---


## The Full Tool Registry - 40+ Tools


Claude Code's tool system lives in [`tools/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates/tools/src). Here's the complete list:


| Tool | What It Does |
|------|-------------|
| **AgentTool** | Spawn child agents/subagents |
| **BashTool** / **PowerShellTool** | Shell execution (with optional sandboxing) |
| **FileReadTool** / **FileEditTool** / **FileWriteTool** | File operations |
| **GlobTool** / **GrepTool** | File search (uses native `bfs`/`ugrep` when available) |
| **WebFetchTool** / **WebSearchTool** / **WebBrowserTool** | Web access |
| **NotebookEditTool** | Jupyter notebook editing |
| **SkillTool** | Invoke user-defined skills |
| **REPLTool** | Interactive VM shell (bare mode) |
| **LSPTool** | Language Server Protocol communication |
| **AskUserQuestionTool** | Prompt user for input |
| **EnterPlanModeTool** / **ExitPlanModeV2Tool** | Plan mode control |
| **BriefTool** | Upload/summarize files to claude.ai |
| **SendMessageTool** / **TeamCreateTool** / **TeamDeleteTool** | Agent swarm management |
| **TaskCreateTool** / **TaskGetTool** / **TaskListTool** / **TaskUpdateTool** / **TaskOutputTool** / **TaskStopTool** | Background task management |
| **TodoWriteTool** | Write todos (legacy) |
| **ListMcpResourcesTool** / **ReadMcpResourceTool** | MCP resource access |
| **SleepTool** | Async delays |
| **SnipTool** | History snippet extraction |
| **ToolSearchTool** | Tool discovery |
| **ListPeersTool** | List peer agents (UDS inbox) |
| **MonitorTool** | Monitor MCP servers |
| **EnterWorktreeTool** / **ExitWorktreeTool** | Git worktree management |
| **ScheduleCronTool** | Schedule cron jobs |
| **RemoteTriggerTool** | Trigger remote agents |
| **WorkflowTool** | Execute workflow scripts |
| **ConfigTool** | Modify settings (**internal only**) |
| **TungstenTool** | Advanced features (**internal only**) |
| **SendUserFile** / **PushNotification** / **SubscribePR** | KAIROS-exclusive tools |


Tools are registered via `getAllBaseTools()` and filtered by feature gates, user type, environment flags, and permission deny rules. There's a **tool schema cache** ([`toolSchemaCache.ts`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/tools/src/lib.rs)) that caches JSON schemas for prompt efficiency.
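
A rough sketch of that filtering step is below. The `ToolSpec` and `ToolContext` shapes and the `selectTools` function are hypothetical, the pairing of `SnipTool` with the `HISTORY_SNIP` flag is my guess from the names, and environment flags are left out for brevity.

```typescript
// Hypothetical registry entry.
interface ToolSpec {
  name: string;
  requiresFeature?: string; // feature gate, if any
  internalOnly?: boolean; // limited to USER_TYPE === 'ant'
}

// Hypothetical view of the current session.
interface ToolContext {
  enabledFeatures: string[];
  userType: string;
  deniedTools: string[]; // names blocked by permission deny rules
}

// Keep only the tools this session is allowed to see.
function selectTools(allTools: ToolSpec[], context: ToolContext): ToolSpec[] {
  return allTools.filter((tool) => {
    if (tool.internalOnly && context.userType !== "ant") return false;
    if (
      tool.requiresFeature !== undefined &&
      context.enabledFeatures.indexOf(tool.requiresFeature) === -1
    ) {
      return false;
    }
    return context.deniedTools.indexOf(tool.name) === -1;
  });
}

const exampleTools: ToolSpec[] = [
  { name: "BashTool" },
  { name: "ConfigTool", internalOnly: true },
  { name: "SnipTool", requiresFeature: "HISTORY_SNIP" }, // pairing assumed
  { name: "WebFetchTool" },
];
const externalTools = selectTools(exampleTools, {
  enabledFeatures: [],
  userType: "external",
  deniedTools: ["WebFetchTool"],
});
```

For this external session only `BashTool` survives: one tool is internal-only, one is behind a disabled flag, and one is denied by rule.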


---


## The Permission and Security System


Claude Code's permission system in [`tools/permissions/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates/core/src) is far more sophisticated than "allow/deny":


**Permission Modes**: `default` (interactive prompts), `auto` (ML-based auto-approval via transcript classifier), `bypass` (skip checks), `yolo` (deny all - ironically named)


**Risk Classification**: Every tool action is classified as **LOW**, **MEDIUM**, or **HIGH** risk. There's a **YOLO classifier** - a fast ML-based permission decision system that decides automatically.


**Protected Files**: `.gitconfig`, `.bashrc`, `.zshrc`, `.mcp.json`, `.claude.json` and others are guarded from automatic editing.


**Path Traversal Prevention**: URL-encoded traversals, Unicode normalization attacks, backslash injection, case-insensitive path manipulation - all handled.
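
To show what "handled" can mean in practice, here is a small sketch that covers three of those cases: URL-encoded traversal, Unicode lookalike characters, and backslashes. It is not Claude Code's implementation; `staysInsideRoot` is a made-up helper that treats the input as relative to a root, decodes only once, and ignores the case-insensitivity problem entirely.

```typescript
// Returns false if a relative path would climb above its root directory.
function staysInsideRoot(requestedPath: string): boolean {
  let decoded: string;
  try {
    decoded = decodeURIComponent(requestedPath); // undo %2e%2e%2f style encoding
  } catch (error) {
    return false; // malformed percent-encoding is rejected outright
  }
  // NFKC folds fullwidth dots and slashes into their ASCII forms;
  // backslashes are treated as path separators.
  const normalized = decoded.normalize("NFKC").replace(/\\/g, "/");
  let depth = 0;
  for (const segment of normalized.split("/")) {
    if (segment === "" || segment === ".") continue;
    if (segment === "..") {
      if (depth === 0) return false; // would escape the root
      depth -= 1;
    } else {
      depth += 1;
    }
  }
  return true;
}
```

The key design choice is to normalize first and check second. Checking the raw string for `..` and normalizing afterwards is exactly the ordering these attacks exploit.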


**Permission Explainer**: A separate LLM call explains tool risks to the user before they approve. When Claude says "this command will modify your git config" - that explanation is itself generated by Claude.


---


## Hidden Beta Headers and Unreleased API Features


The [`constants/betas.ts`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/api/src/lib.rs) file reveals every beta feature Claude Code negotiates with the API:


```typescript
'interleaved-thinking-2025-05-14'      // Extended thinking
'context-1m-2025-08-07'                // 1M token context window
'structured-outputs-2025-12-15'        // Structured output format
'web-search-2025-03-05'                // Web search
'advanced-tool-use-2025-11-20'         // Advanced tool use
'effort-2025-11-24'                    // Effort level control
'task-budgets-2026-03-13'              // Task budget management
'prompt-caching-scope-2026-01-05'      // Prompt cache scoping
'fast-mode-2026-02-01'                 // Fast mode (Penguin)
'redact-thinking-2026-02-12'           // Redacted thinking
'token-efficient-tools-2026-03-28'     // Token-efficient tool schemas
'afk-mode-2026-01-31'                  // AFK mode
'cli-internal-2026-02-09'              // Internal-only (ant)
'advisor-tool-2026-03-01'              // Advisor tool
'summarize-connector-text-2026-03-13'  // Connector text summarization
```


`redact-thinking`, `afk-mode`, and `advisor-tool` are not released yet.


---


## Feature Gating - Internal vs. External Builds


This is one of the most architecturally interesting parts of the codebase.


Claude Code uses **compile-time feature flags** via Bun's `feature()` function from `bun:bundle`. The bundler **constant-folds** these and **dead-code-eliminates** the gated branches from external builds. The complete list of known flags:


| Flag | What It Gates |
|------|--------------|
| `PROACTIVE` / `KAIROS` | Always-on assistant mode |
| `KAIROS_BRIEF` | Brief command |
| `BRIDGE_MODE` | Remote control via claude.ai |
| `DAEMON` | Background daemon mode |
| `VOICE_MODE` | Voice input |
| `WORKFLOW_SCRIPTS` | Workflow automation |
| `COORDINATOR_MODE` | Multi-agent orchestration |
| `TRANSCRIPT_CLASSIFIER` | AFK mode (ML auto-approval) |
| `BUDDY` | Companion pet system |
| `NATIVE_CLIENT_ATTESTATION` | Client attestation |
| `HISTORY_SNIP` | History snipping |
| `EXPERIMENTAL_SKILL_SEARCH` | Skill discovery |


Additionally, `USER_TYPE === 'ant'` gates Anthropic-internal features: staging API access (`claude-ai.staging.ant.dev`), internal beta headers, Undercover mode, the `/security-review` command, `ConfigTool`, `TungstenTool`, and debug prompt dumping to `~/.config/claude/dump-prompts/`.


**GrowthBook** handles runtime feature gating with aggressively cached values. Feature flags prefixed with `tengu_` control everything from fast mode to memory consolidation. Many checks use `getFeatureValue_CACHED_MAY_BE_STALE()` to avoid blocking the main loop - stale data is considered acceptable for feature gates.
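
The "cached, may be stale" pattern looks roughly like this. The sketch does not use GrowthBook's actual API; `StaleFeatureCache`, `getCachedMayBeStale`, and the flag name `tengu_example` are all invented to show the shape of the idea.

```typescript
// Answers feature checks synchronously from a local cache and refreshes
// the cache in the background, so callers never wait on the network.
class StaleFeatureCache {
  private values = new Map<string, boolean>();

  constructor(private fetchRemote: (flag: string) => Promise<boolean>) {}

  // Returns whatever is cached right now (or the default on first use).
  // The value may be out of date by one refresh.
  getCachedMayBeStale(flag: string, defaultValue: boolean): boolean {
    const cached = this.values.get(flag);
    void this.fetchRemote(flag)
      .then((fresh) => {
        this.values.set(flag, fresh);
      })
      .catch(() => {
        // Keep the stale value if the refresh fails.
      });
    return cached === undefined ? defaultValue : cached;
  }
}
```

The trade-off is explicit in the name: the first check after a flag flips still sees the old value, and in exchange the main loop never blocks on a flag lookup.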


---


## Other Notable Findings


### The Upstream Proxy


The [`upstreamproxy/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates/bridge/src) directory contains a container-aware proxy relay that uses **`prctl(PR_SET_DUMPABLE, 0)`** to prevent same-UID ptrace of heap memory. It reads session tokens from `/run/ccr/session_token` in CCR containers, downloads CA certificates, and starts a local CONNECT→WebSocket relay. Anthropic API, GitHub, npmjs.org, and pypi.org are explicitly excluded from proxying.


### Bridge Mode


A JWT-authenticated bridge system in [`bridge/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates/bridge/src) for integrating with claude.ai. Supports work modes: `'single-session'` | `'worktree'` | `'same-dir'`. Includes trusted device tokens for elevated security tiers.


### Model Codenames in Migrations


The [`migrations/`](https://github.com/kuberwastaken/claude-code/tree/main/src-rust/crates/core/src) directory reveals the internal codename history:

- `migrateFennecToOpus` - **"Fennec"** (the fox) was an Opus codename
- `migrateSonnet1mToSonnet45` - Sonnet with 1M context became Sonnet 4.5
- `migrateSonnet45ToSonnet46` - Sonnet 4.5 → Sonnet 4.6
- `resetProToOpusDefault` - Pro users were reset to Opus at some point

### Attribution Header

Every API request includes:
```
x-anthropic-billing-header: cc_version={VERSION}.{FINGERPRINT};
  cc_entrypoint={ENTRYPOINT}; cch={ATTESTATION_PLACEHOLDER}; cc_workload={WORKLOAD};
```

The `NATIVE_CLIENT_ATTESTATION` feature lets Bun's HTTP stack overwrite the `cch=00000` placeholder with a computed hash - essentially a client authenticity check so Anthropic can verify the request came from a real Claude Code install.
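
As a rough illustration of the header layout only, the value can be assembled from its four fields like this. The interface, function name, and sample values are hypothetical, and the sketch deliberately leaves `cch` as the `00000` placeholder, since how the real hash is computed is not covered here.

```typescript
// Hypothetical field container; the names are illustrative.
interface BillingFields {
  version: string;
  fingerprint: string;
  entrypoint: string;
  workload: string;
}

const BILLING_HEADER_NAME = "x-anthropic-billing-header";
const ATTESTATION_PLACEHOLDER = "00000";

// Joins the fields in the order shown above, each terminated by ";".
function buildBillingHeaderValue(fields: BillingFields): string {
  return [
    `cc_version=${fields.version}.${fields.fingerprint}`,
    `cc_entrypoint=${fields.entrypoint}`,
    `cch=${ATTESTATION_PLACEHOLDER}`,
    `cc_workload=${fields.workload}`,
  ]
    .map((part) => part + ";")
    .join(" ");
}
```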

### Computer Use - "Chicago"

Claude Code includes a full Computer Use implementation, internally codenamed **"Chicago"**, built on `@ant/computer-use-mcp`. It provides screenshot capture, click/keyboard input, and coordinate transformation. Gated to Max/Pro subscriptions (with an ant bypass for internal users).

### Pricing

For anyone wondering - all pricing in [`utils/modelCost.ts`](https://github.com/kuberwastaken/claude-code/blob/main/src-rust/crates/api/src/lib.rs) matches [Anthropic's public pricing](https://docs.anthropic.com/en/docs/about-claude/models) exactly. Nothing newsworthy there.

---

## Final Thoughts

This is, without exaggeration, one of the most comprehensive looks we've ever gotten at how *the* production AI coding assistant works under the hood. Through the actual source code.

A few things stand out:

**The engineering is genuinely impressive.** This isn't a weekend project wrapped in a CLI. The multi-agent coordination, the dream system, the three-gate trigger architecture, the compile-time feature elimination - these are deeply considered systems.

**There's a LOT more coming.** KAIROS (always-on Claude), ULTRAPLAN (30-minute remote planning), the Buddy companion, coordinator mode, agent swarms, workflow scripts - the codebase is significantly ahead of the public release. Most of these are feature-gated and invisible in external builds.

**The internal culture shows.** Animal codenames (Tengu, Fennec, Capybara), playful feature names (Penguin Mode, Dream System), a Tamagotchi pet system with gacha mechanics. Some people at Anthropic are having fun.

If there's one takeaway here, it's that security is hard. But `.npmignore` is harder, apparently :P

---

A writeup by [Kuber Mehta](https://kuber.studio/)

- `public/claude-files.png` - binary file, not shown (721 KiB)
- `public/leak-tweet.png` - binary file, not shown (407 KiB)

`spec/00_overview.md` (new file, 334 lines):

# Claude Code - Master Architecture Overview

> **Repository:** `X:\Bigger-Projects\Claude-Code`
> **Primary Language:** TypeScript/TSX (~1,902 files, ~800K+ LOC)
> **Secondary Language:** Rust (~47 files, in-progress port)
> **Bundler:** Bun
> **UI Framework:** Custom Ink (React reconciler for terminal)
> **Runtime Target:** Node.js / Bun CLI

---

## 1. What Is Claude Code?

Claude Code is an AI-powered CLI tool and coding assistant. It is a full-featured interactive terminal application that:

- Embeds a Claude AI model as an agentic coding assistant
- Runs in the terminal using a custom React-based TUI (Terminal User Interface)
- Executes tools (file read/write, bash, grep, web search, etc.) with user permission
- Supports multi-agent task delegation, background agents, and swarm mode
- Integrates with IDEs (VS Code, JetBrains) via direct-connect bridge
- Supports remote sessions via WebSocket/SSE transports
- Has a plugin/skills marketplace
- Includes voice input (speech-to-text)
- Features a companion "buddy" system (Tamagotchi-style)
- Syncs sessions to the cloud via the bridge protocol

---

## 2. Repository Structure

```
Claude-Code/
├── src/                            # Main TypeScript/TSX source (34 MB, ~1,902 files)
│   ├── main.tsx                    # PRIMARY ENTRY POINT (4,683 lines)
│   ├── replLauncher.tsx            # REPL mode launcher
│   ├── query.ts                    # Main query/turn execution engine (69KB)
│   ├── QueryEngine.ts              # Query engine class (46KB)
│   ├── Tool.ts                     # Tool base framework (30KB)
│   ├── Task.ts                     # Task definitions
│   ├── commands.ts                 # Command registry (25KB)
│   ├── context.ts                  # Context management
│   ├── cost-tracker.ts             # Cost tracking (11KB)
│   ├── costHook.ts                 # Cost hooks
│   ├── history.ts                  # Session history (14KB)
│   ├── dialogLaunchers.tsx         # Dialog launchers (23KB)
│   ├── interactiveHelpers.tsx      # Interactive UI helpers (57KB)
│   ├── projectOnboardingState.ts   # Project onboarding state
│   ├── setup.ts                    # Initialization (21KB)
│   ├── tasks.ts                    # Task management
│   ├── tools.ts                    # Tools registry (17KB)
│   ├── ink.ts                      # Ink export shim
│   │
│   ├── assistant/                  # Assistant session history
│   ├── bootstrap/                  # Bootstrap/state
│   ├── bridge/                     # Bridge protocol (31 files)
│   ├── buddy/                      # Companion pet system (6 files)
│   ├── cli/                        # CLI framework & transports (19 files)
│   ├── commands/                   # 87 slash commands (207 files)
│   ├── components/                 # React/Ink UI components (389 files, 32 subdirs)
│   ├── constants/                  # Constants & config values (21 files)
│   ├── context/                    # React context providers (9 files)
│   ├── coordinator/                # Coordinator mode logic
│   ├── entrypoints/                # Multiple entry points (8 files)
│   ├── hooks/                      # React hooks (104 files)
│   ├── ink/                        # Custom Ink terminal framework (96 files)
│   ├── keybindings/                # Keyboard shortcut system (14 files)
│   ├── memdir/                     # Memory directory system (8 files)
│   ├── migrations/                 # Settings migrations (11 files)
│   ├── moreright/                  # useMoreRight hook
│   ├── native-ts/                  # Native TypeScript bindings (4 files)
│   ├── outputStyles/               # Output style loader
│   ├── plugins/                    # Plugin system (2 files)
│   ├── query/                      # Query helpers (4 files)
│   ├── remote/                     # Remote session management (4 files)
│   ├── schemas/                    # Zod/JSON schemas
│   ├── screens/                    # Top-level screen layouts (3 files)
│   ├── server/                     # Direct-connect server (3 files)
│   ├── services/                   # Business logic services (130 files)
│   ├── skills/                     # Claude skills/slash commands (20 files)
│   ├── tools/                      # Tool implementations (40+ tools, 184 files)
│   ├── types/                      # TypeScript type definitions
│   ├── utils/                      # Utility functions (~564 files)
│   └── voice/                      # Voice integration
│
├── claude-code-rust/               # Rust port (in-progress, 47 files)
│   ├── Cargo.toml                  # Workspace manifest
│   ├── tools/                      # 27 files - tool implementations
│   ├── query/                      # 5 files - query system
│   ├── cli/                        # 3 files - CLI framework
│   ├── api/                        # 2 files - API bindings
│   ├── bridge/                     # 2 files - bridge protocol
│   ├── commands/                   # 2 files - command system
│   ├── core/                       # 2 files - core utilities
│   ├── mcp/                        # 2 files - MCP integration
│   └── tui/                        # 2 files - terminal UI
│
├── public/                         # Static assets
├── README.md                       # Main documentation (27KB)
└── .git/                           # Git metadata
```

---

## 3. High-Level Architecture

```
┌─────────────────────────────────────────────────────────────────┐
│                         USER INTERFACE                          │
│  Terminal (Ink TUI) ←→ React Components ←→ Hooks ←→ Context     │
└────────────────────────────┬────────────────────────────────────┘
                             │
┌────────────────────────────▼────────────────────────────────────┐
│                        MAIN APPLICATION                         │
│  main.tsx → REPL.tsx → PromptInput → MessageList                │
│  Commands (87) ←→ Command Registry ←→ Plugin System             │
└────────────────────────────┬────────────────────────────────────┘
                             │
┌────────────────────────────▼────────────────────────────────────┐
│                          QUERY ENGINE                           │
│  query.ts → QueryEngine.ts → Tool execution → Response handling │
│  Token budget → Stop hooks → Compact → History                  │
└────────────────────────────┬────────────────────────────────────┘
                             │
┌────────────────────────────▼────────────────────────────────────┐
│                     TOOL SYSTEM (40+ tools)                     │
│  BashTool, FileReadTool, FileEditTool, FileWriteTool            │
│  GlobTool, GrepTool, WebFetchTool, WebSearchTool                │
│  AgentTool, TaskCreateTool, MCPTool, SkillTool, ...             │
└────────────────────────────┬────────────────────────────────────┘
                             │
┌────────────────────────────▼────────────────────────────────────┐
│                         SERVICES LAYER                          │
│  API Client (claude.ts) → Analytics → SessionMemory             │
│  AutoDream → Compact → RateLimit → MCP servers                  │
└────────────────────────────┬────────────────────────────────────┘
                             │
┌────────────────────────────▼────────────────────────────────────┐
│                         TRANSPORT LAYER                         │
│  CLI (local) / Bridge (remote) / IDE direct-connect             │
│  SSETransport | WebSocketTransport | HybridTransport            │
└─────────────────────────────────────────────────────────────────┘
```

---

## 4. Core Subsystems

### 4.1 Query / Turn Execution (`query.ts`, `QueryEngine.ts`)
The core loop that:
1. Takes user input
2. Builds the API request (system prompt + history + tools)
3. Streams the response from Claude API
4. Handles tool use (executes tools, feeds results back)
5. Manages token budget and context compaction
6. Tracks cost

### 4.2 Tool Framework (`Tool.ts`, `tools/`)
- Base `Tool` abstract class/interface
- Input schema validation (Zod)
- Permission system (each tool declares required permissions)
- 40+ tool implementations
- Sandboxing for dangerous tools
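
The contract described in 4.2 can be sketched as follows. This is an illustration under stated assumptions, not the contents of `Tool.ts`: the type and function names are hypothetical, the permission check is reduced to set membership, and a hand-written validator stands in for the Zod schema so the example has no dependencies.

```typescript
// Hypothetical permission names for the sketch.
type ToolPermission = "FileRead" | "Bash";

type Validation<I> =
  | { kind: "ok"; value: I }
  | { kind: "error"; message: string };

// Assumed shape: each tool declares a permission, validates raw input, then runs.
interface ToolDef<I, O> {
  toolName: string;
  requiredPermission: ToolPermission;
  validate(input: unknown): Validation<I>;
  run(input: I): O;
}

// Example tool (hypothetical): upper-cases a string field.
const echoTool: ToolDef<{ text: string }, string> = {
  toolName: "Echo",
  requiredPermission: "FileRead",
  validate(input: unknown): Validation<{ text: string }> {
    if (typeof input === "object" && input !== null) {
      const text = (input as { text?: unknown }).text;
      if (typeof text === "string") return { kind: "ok", value: { text } };
    }
    return { kind: "error", message: "expected { text: string }" };
  },
  run: (input) => input.text.toUpperCase(),
};

// Checks permission first, then validates, then executes.
function callTool<I, O>(tool: ToolDef<I, O>, rawInput: unknown, granted: Set<ToolPermission>): O {
  if (!granted.has(tool.requiredPermission)) {
    throw new Error(`permission denied: ${tool.requiredPermission}`);
  }
  const checked = tool.validate(rawInput);
  if (checked.kind === "error") {
    throw new Error(`invalid input for ${tool.toolName}: ${checked.message}`);
  }
  return tool.run(checked.value);
}
```

Validating before `run` means tool bodies only ever see well-typed input, which is the main reason to attach a schema to each tool.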

### 4.3 Terminal UI (`ink/`, `components/`)
- Custom React reconciler that renders to terminal
- Layout engine based on Yoga (flexbox for terminal)
- Event system (keyboard, mouse, focus)
- ANSI/CSI/escape sequence processing
- Components: Messages, PromptInput, Spinner, Dialogs, etc.

### 4.4 Commands System (`commands/`, `commands.ts`)
- 87 slash commands (e.g., `/compact`, `/diff`, `/plan`, `/mcp`)
- Plugin-contributed commands
- Command registry with fuzzy matching
- Keybinding integration

### 4.5 Bridge Protocol (`bridge/`)
- Enables remote/cloud-synced sessions
- JWT-authenticated WebSocket/SSE connection to cloud backend servers
- REPL bridge for IDE integration
- Message polling, flush gates, session runners

### 4.6 Multi-Agent System (`tools/AgentTool.ts`, `components/agents/`)
- Spawn sub-agents as isolated Claude instances
- Background task execution
- Coordinator mode (orchestrate multiple agents)
- Swarm mode (parallel worker agents)
- Team system for collaborative agents

### 4.7 Memory System (`memdir/`, `services/SessionMemory/`, `services/autoDream/`)
- Short-term: session history
- Long-term: memdir (markdown files in `~/.claude/memory/`)
- Auto-consolidation: "dream" service consolidates memories during idle
- Memory scanning/relevance scoring for context injection

### 4.8 MCP Integration (`tools/MCPTool.ts`, `components/mcp/`, `entrypoints/mcp.ts`)
- Model Context Protocol server support
- Dynamic tool registration from MCP servers
- Resource management
- Elicitation dialog support

### 4.9 Plugin/Skills System (`plugins/`, `skills/`, `commands/plugin/`)
- Built-in plugins
- Marketplace for community plugins
- Skills: user-invocable slash command macros
- Plugin trust model with approval flow

### 4.10 IDE Integration (`bridge/`, `hooks/useIDEIntegration.tsx`)
- VS Code / JetBrains extensions connect via direct-connect
- Live diff viewing in IDE
- File selection sync (IDE → Claude)
- Status indicator in IDE

---

## 5. Data Flow: A User Turn

```
1. User types in PromptInput
2. Input submitted → useCommandQueue processes
3. If slash command: dispatched to command handler
4. If regular prompt: sent to query.ts runQuery()
5. QueryEngine builds API request:
   - System prompt (from constants/prompts.ts + CLAUDE.md)
   - Message history (from history.ts)
   - Available tools (filtered by permission)
   - Token budget constraints
6. Stream response from the Claude API (services/api/claude.ts)
7. For each content block:
   - text → render AssistantTextMessage
   - thinking → render AssistantThinkingMessage
   - tool_use → execute tool, show permission dialog if needed
8. Tool results fed back into next API request
9. Loop until stop condition (no more tool use, stop hook, budget exceeded)
10. Final response rendered, history updated, cost tracked
```
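
Steps 5 through 9 amount to a tool-use loop. The sketch below shows that loop in its simplest synchronous form, under stated assumptions: the model is a plain function instead of a streaming API call, tools take and return strings, the step cap stands in for the token budget, and every name (`runTurn`, `ModelFn`, `ToolTable`) is hypothetical rather than taken from `query.ts`.

```typescript
// Simplified content blocks: text, or a request to call a tool.
type ContentBlock =
  | { type: "text"; text: string }
  | { type: "tool_use"; tool: string; input: string };

type TurnEntry = { role: "user" | "assistant" | "tool_result"; content: string };

// Stand-in for the API call: maps the conversation so far to new blocks.
type ModelFn = (conversation: TurnEntry[]) => ContentBlock[];
type ToolTable = Record<string, (input: string) => string>;

function runTurn(prompt: string, model: ModelFn, tools: ToolTable, maxSteps = 8): TurnEntry[] {
  const conversation: TurnEntry[] = [{ role: "user", content: prompt }];
  for (let step = 0; step < maxSteps; step++) {
    const blocks = model(conversation);
    let usedTool = false;
    for (const block of blocks) {
      if (block.type === "text") {
        conversation.push({ role: "assistant", content: block.text });
      } else {
        usedTool = true;
        const tool = tools[block.tool];
        const result = tool ? tool(block.input) : `unknown tool: ${block.tool}`;
        // Tool results go back into the conversation for the next request.
        conversation.push({ role: "tool_result", content: result });
      }
    }
    if (!usedTool) break; // stop condition: the model asked for no more tools
  }
  return conversation;
}
```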

---

## 6. Key Files by Importance

| Rank | File | Size | Role |
|------|------|------|------|
| 1 | `src/main.tsx` | 4,683 lines | Primary entry point, app initialization |
| 2 | `src/query.ts` | 69KB | Main query execution loop |
| 3 | `src/QueryEngine.ts` | 46KB | Query engine class |
| 4 | `src/interactiveHelpers.tsx` | 57KB | Interactive UI helpers |
| 5 | `src/Tool.ts` | 30KB | Tool base framework |
| 6 | `src/commands.ts` | 25KB | Command registry |
| 7 | `src/dialogLaunchers.tsx` | 23KB | Dialog launch system |
| 8 | `src/setup.ts` | 21KB | Initialization |
| 9 | `src/tools.ts` | 17KB | Tools registry |
| 10 | `src/history.ts` | 14KB | Session history |

---

## 7. Permission Model

Claude Code uses a layered permission system:

1. **Automatic** - Read-only operations, info queries
2. **Ask Once** - Prompt user, remember for session
3. **Ask Always** - Prompt user every time
4. **Deny** - Block completely

Permission rules are stored in settings (global `~/.claude/settings.json`, project `.claude/settings.json`) and can be configured with patterns.

Permission categories:
- `Bash` - Shell command execution
- `FileRead` - Reading files/directories
- `FileEdit` - Editing existing files
- `FileWrite` - Creating new files
- `WebFetch` - HTTP requests
- `MCP` - MCP tool calls
- `Sandbox` - Sandboxed execution
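
One way the four levels and pattern-based rules could combine is sketched below. The rule shape, the `*` wildcard syntax, the first-match-wins order, and the "ask always" default are all assumptions made for illustration; this section does not specify the actual rule format or precedence.

```typescript
type Decision = "automatic" | "ask-once" | "ask-always" | "deny";

// Hypothetical rule shape: a category, a pattern over the target, and a decision.
interface PermissionRule {
  category: string;
  pattern: string;
  decision: Decision;
}

// Glob-like match where `*` matches any run of characters (assumed syntax).
function matchesPattern(pattern: string, target: string): boolean {
  const escaped = pattern
    .replace(/[.+?^${}()|[\]\\]/g, "\\$&")
    .replace(/\*/g, ".*");
  return new RegExp(`^${escaped}$`).test(target);
}

// First matching rule wins; unmatched requests fall back to "ask-always".
// An "ask-once" rule already approved this session is treated as automatic.
function decide(
  rules: PermissionRule[],
  category: string,
  target: string,
  sessionGrants: Set<string>,
): Decision {
  let decision: Decision = "ask-always";
  for (const rule of rules) {
    if (rule.category === category && matchesPattern(rule.pattern, target)) {
      decision = rule.decision;
      break;
    }
  }
  if (decision === "ask-once" && sessionGrants.has(`${category}:${target}`)) {
    return "automatic";
  }
  return decision;
}
```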

---

## 8. Settings System

Layered settings (in priority order):
1. **Managed** - Enterprise/managed settings (read-only)
2. **Local project** - `.claude/settings.local.json` (gitignored)
3. **Project** - `.claude/settings.json` (shared)
4. **Global** - `~/.claude/settings.json`

Settings include: model selection, permission rules, API key, theme, keybindings, MCP server configurations, beta features.
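
The priority order above can be read as "the highest layer that defines a key wins". A minimal sketch of that resolution, assuming a shallow per-key merge: the function name and sample keys are hypothetical, and the real system may merge nested values such as permission rules differently.

```typescript
type SettingsLayer = Record<string, unknown>;

// Layers are passed highest priority first: managed, local project, project, global.
// Lower layers are applied first so that higher layers overwrite them.
function resolveSettings(layersHighToLow: SettingsLayer[]): SettingsLayer {
  const merged: SettingsLayer = {};
  for (const layer of [...layersHighToLow].reverse()) {
    Object.assign(merged, layer);
  }
  return merged;
}
```

For example, if the managed layer sets `model` and the global layer sets both `model` and `theme`, the result takes `model` from the managed layer and `theme` from the global one.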

---

## 9. Model Support

Based on migration files, the model evolution:
- `claude-3-sonnet` → `claude-sonnet-1m` → `claude-sonnet-4-5` → `claude-sonnet-4-6`
- `claude-3-opus` → `claude-opus-1m` → `claude-opus` → (various)
- `claude-3-5-haiku` → (current)
- `claude-haiku-4-5` (current haiku)

Current defaults (as of source): `claude-sonnet-4-6` and `claude-opus-4-6`

---

## 10. Analytics & Telemetry

- **First-party logging** - Session events to the backend (`services/analytics/`)
- **Datadog** - Performance metrics
- **GrowthBook** - Feature flags / A/B testing
- **Opt-out** - `services/api/metricsOptOut.ts` handles user opt-out

---

## 11. Spec Document Index

| File | Contents |
|------|----------|
| `00_overview.md` | This file: master architecture overview |
| `01_core_entry_query.md` | Entry points, query system, history, cost tracking |
| `02_commands.md` | All 87 slash commands |
| `03_tools.md` | All 40+ tool implementations |
| `04_components_core_messages.md` | Top-level components and message components |
| `05_components_agents_permissions_design.md` | Agents, permissions, design system, feature modules |
| `06_services_context_state.md` | Services, context providers, state, screens, server |
| `07_hooks.md` | All React hooks |
| `08_ink_terminal.md` | Ink terminal rendering framework |
| `09_bridge_cli_remote.md` | Bridge protocol, CLI framework, remote sessions |
| `10_utils.md` | All utility functions (~564 files) |
| `11_special_systems.md` | Buddy, memory, keybindings, skills, voice, plugins |
| `12_constants_types.md` | All constants, types, and configuration |
| `13_rust_codebase.md` | Rust port/rewrite |
| `INDEX.md` | Quick-reference index |

---

*Generated from source analysis of the Claude Code codebase. ~1,902 TypeScript/TSX files, ~800K+ lines of code.*

New files whose diffs are suppressed because they are too large:

- `spec/01_core_entry_query.md` - 1925 lines
- `spec/02_commands.md` - 2065 lines
- `spec/03_tools.md` - 2171 lines
- `spec/04_components_core_messages.md` - 2586 lines
- `spec/05_components_agents_permissions_design.md` - 1952 lines
- `spec/06_services_context_state.md` - 2631 lines
- `spec/07_hooks.md` - 2136 lines
- `spec/08_ink_terminal.md` - 1608 lines
- `spec/09_bridge_cli_remote.md` - 2233 lines
- `spec/10_utils.md` - 2182 lines
- `spec/11_special_systems.md` - 1767 lines
- `spec/12_constants_types.md` - 1903 lines
- `spec/13_rust_codebase.md` - 1537 lines

`spec/INDEX.md` (new file, 122 lines):

# Claude Code - Spec Index

> Quick-reference index across all spec documents.
> Total spec coverage: ~990 KB across 15 markdown files.

---

## Spec Files

| # | File | Size | What's Inside |
|---|------|------|---------------|
| - | [00_overview.md](00_overview.md) | 16 KB | Master architecture, repo structure, data flow, permission model, settings layers |
| 01 | [01_core_entry_query.md](01_core_entry_query.md) | 73 KB | `main.tsx`, `query.ts`, `QueryEngine.ts`, entry points, history, cost tracking, token budget |
| 02 | [02_commands.md](02_commands.md) | 71 KB | All 100+ slash commands with args, options, and implementation |
| 03 | [03_tools.md](03_tools.md) | 67 KB | All 40+ tools: input schemas, permissions, outputs, shared utilities |
| 04 | [04_components_core_messages.md](04_components_core_messages.md) | 93 KB | 130 top-level UI components + all message rendering components |
| 05 | [05_components_agents_permissions_design.md](05_components_agents_permissions_design.md) | 64 KB | Agent creation wizard, permission dialogs, design system, PromptInput, Spinner |
| 06 | [06_services_context_state.md](06_services_context_state.md) | 95 KB | Analytics, API client, session memory, autoDream, compact, voice, contexts, state |
| 07 | [07_hooks.md](07_hooks.md) | 84 KB | All 104 React hooks with params, return types, and behavior |
| 08 | [08_ink_terminal.md](08_ink_terminal.md) | 78 KB | Custom terminal framework: React reconciler, Yoga layout, screen buffer, ANSI tokenizer |
| 09 | [09_bridge_cli_remote.md](09_bridge_cli_remote.md) | 75 KB | Bridge protocol, JWT auth, SSE/WebSocket/Hybrid transports, remote sessions |
| 10 | [10_utils.md](10_utils.md) | 60 KB | ~564 utility files organized by category |
| 11 | [11_special_systems.md](11_special_systems.md) | 64 KB | Buddy/Tamagotchi, memdir, keybindings, skills, voice, plugins, migrations |
| 12 | [12_constants_types.md](12_constants_types.md) | 83 KB | Every constant, type, OAuth config, system prompts, tool limits, beta headers |
| 13 | [13_rust_codebase.md](13_rust_codebase.md) | 63 KB | Complete Rust rewrite: all 9 crates, 33 tools, query loop, TUI, bridge |

---

## Quick Lookup

### "Where is X documented?"

| Topic | Spec File | Section |
|-------|-----------|---------|
| Main entry point (`main.tsx`) | 01 | §1 |
| Query/turn execution loop | 01 | §2–3 |
| Token budget & compaction | 01 | §token-budget |
| Tool base class & framework | 03 | §1 |
| BashTool | 03 | §BashTool |
| FileEditTool | 03 | §FileEditTool |
| AgentTool (sub-agents) | 03 | §AgentTool |
| WebSearchTool | 03 | §WebSearchTool |
| MCPTool | 03 | §MCPTool |
| All slash commands | 02 | §per-command |
| `/compact` command | 02 | §compact |
| `/mcp` command | 02 | §mcp |
| `/plan` command | 02 | §plan |
| Permission dialog system | 05 | §permissions |
| Permission rules (settings) | 05 | §rules |
| PromptInput component | 05 | §PromptInput |
| Message rendering | 04 | §messages |
| Spinner component | 05 | §Spinner |
| Agent creation wizard | 05 | §agents |
| Claude API client | 06 | §api/claude |
| Analytics / telemetry | 06 | §analytics |
| Session memory | 06 | §SessionMemory |
| AutoDream consolidation | 06 | §autoDream |
| Rate limiting | 06 | §claudeAiLimits |
| Context compaction | 06 | §compact |
| React contexts | 06 | §context |
| Bootstrap state (80+ fields) | 06 | §bootstrap |
| Coordinator mode | 06 | §coordinator |
| All React hooks | 07 | §per-hook |
| Ink reconciler | 08 | §reconciler |
| Yoga layout engine | 08 | §layout |
| Screen buffer / rendering | 08 | §screen |
| ANSI/CSI/ESC handling | 08 | §termio |
| Bridge protocol | 09 | §bridge |
| JWT authentication | 09 | §jwtUtils |
| SSE transport | 09 | §SSETransport |
| WebSocket transport | 09 | §WebSocketTransport |
| Remote sessions | 09 | §remote |
| Buddy/Tamagotchi | 11 | §buddy |
| Gacha mechanics (PRNG) | 11 | §buddy-gacha |
| Memory directory system | 11 | §memdir |
| Keybinding parser | 11 | §keybindings |
| Skills system | 11 | §skills |
| Voice / STT | 11 | §voice |
| Plugin system | 11 | §plugins |
| Model migration history | 11 | §migrations |
| All constants | 12 | §constants |

|